Compliance with the AI Act, implemented with rigour.

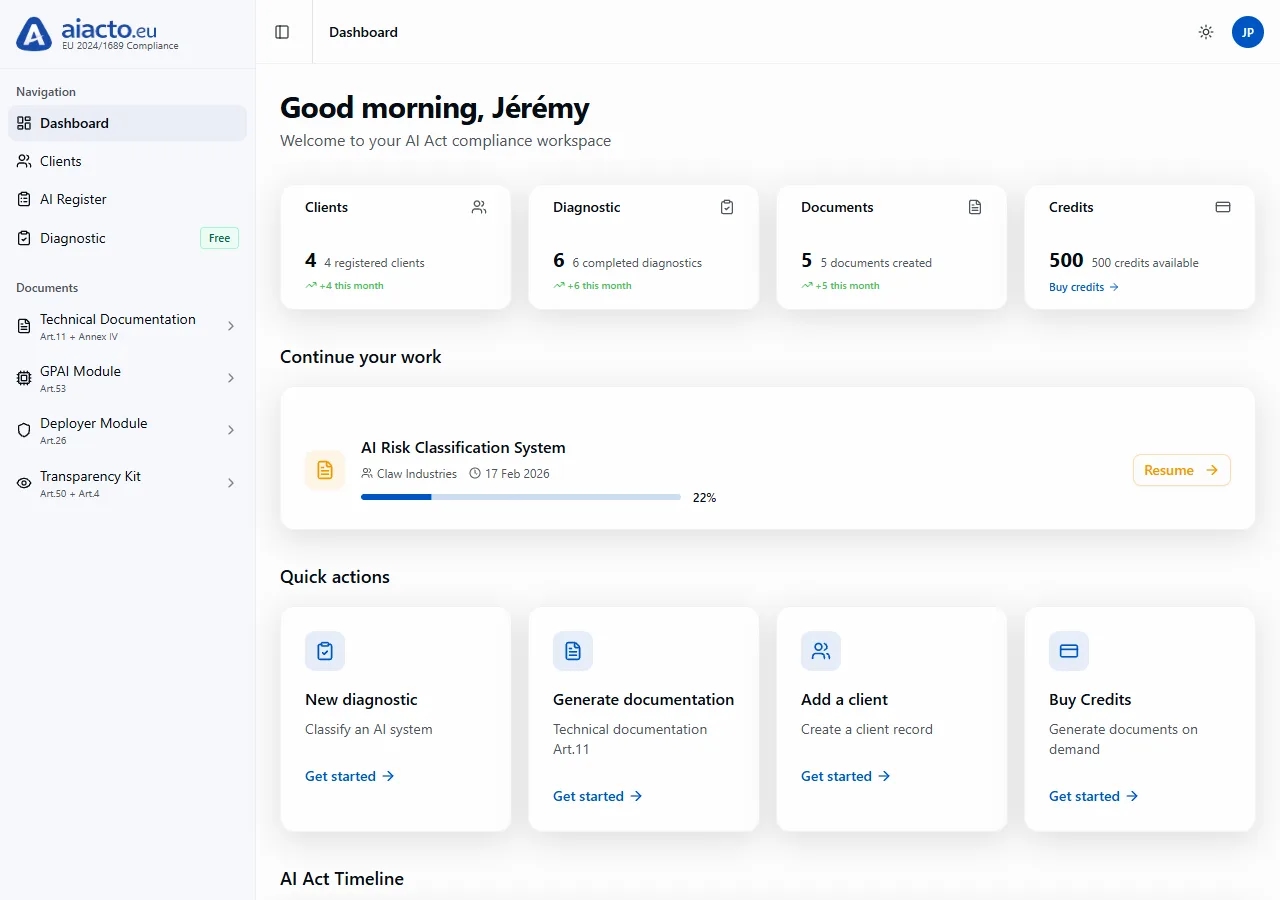

The French platform that structures, documents and steers your compliance with Regulation (EU) 2024/1689. Diagnostic, Article 11 documentation and human oversight, all in a single audit-ready workflow.

The AI Act is coming into force. Obligations are piling up.

Regulation (EU) 2024/1689 imposes a cascade of obligations, including technical documentation, human oversight, risk management and transparency, with penalties of up to 7% of worldwide annual turnover for the most serious infringements.

Compliance teams have fewer than 19 months to structure their response before the "high-risk" phase of December 2027.

Read our analysis of the regulation

Three modules, one continuous regulatory thread.

Each module produces a defensible deliverable: a reasoned diagnostic, Article 11 documentation or an Article 26 register. Your team reviews each one before sign-off.

Reasoned diagnostic

Identify the risk level, regulatory role (provider, deployer, importer, distributor) and the full list of your obligations in under 5 minutes.

Article 11 documentation

Draft the 14 sections of Annex IV with an assistant that proposes text for you to validate. Every paragraph is referenced to its source article.

Multi-client management

For DPOs and consultancies: centralise the register of AI systems, the history of diagnostics and client validations in a single workspace.

A four-step methodology, aligned with the requirements of the regulation.

From system classification to the final audit file, every deliverable is produced in the order expected by supervisory authorities.

Identify the risk level

Is the system prohibited, high-risk, limited-transparency or minimal? The guided diagnostic decides in 12 structured questions.

Annex III · Article 6

Draft the technical documentation

For each high-risk system, produce the 14 sections of Annex IV with the assistant. You validate every paragraph.

Article 11

Have it reviewed by a DPO or counsel

Send a secure link to your external reviewer. They comment, approve or request changes, without creating an account.

Article 14

Retain for audit

Every version is timestamped and kept for 10 years. PDF export, Article 26 register and audit trail ready to transmit.

Article 12 · 18

Four regulatory roles, distinct obligations.

The regulation does not treat all actors in the AI chain the same way. Identifying your role, and that of your partners, is the first step of any compliance work.

Review obligations by article

Provider

"Places on the market or puts into service an AI system in the Union, whether for payment or free of charge."

Deployer

"Uses an AI system in the course of a professional activity, under its own authority."

Importer

"Places on the market an AI system bearing the name of an entity established outside the Union."

Distributor

"Makes an AI system available without modifying its essential properties."

A French platform, designed for European compliance requirements.

Sovereign hosting, end-to-end encryption, immutable logging and full transparency on the AI models used. Our infrastructure meets the strictest requirements of regulated sectors.

For a European retail group, structuring the documentation for 23 AI systems, 9 of them high-risk, takes around four months on aiacto, versus two years with an external consultancy, at one sixth of the budget.

Five key milestones to know.

Regulation (EU) 2024/1689 enters into application in successive stages until 2028 (omnibus timeline).

Prohibited practices + AI Literacy

AI systems with unacceptable risk are prohibited. Mandatory team training.

GPAI obligations

General-purpose AI models: documentation, transparency on training.

Art. 50 transparency

Transparency obligations for generated content and systems interacting with persons.

High-risk systems (Annex III)

Full application to high-risk systems listed in Annex III of the Regulation.

Regulated products (Annex I)

Extension to AI systems embedded in products already covered by sectoral legislation.

Start free, scale as your needs grow.

Prices in euros, excluding tax. Monthly payment with no commitment. Cancel any time.

To evaluate the platform and run a first diagnostic.

For providers of AI systems.

For providers of general-purpose AI models.

Multi-client, SSO, SLA and dedicated support.

Everything you need to know about the AI Act.

Still have an unanswered question? Our team replies within 24 working hours.

Structure your AI Act compliance today.

Three minutes for the diagnostic. Three free credits on sign-up. No credit card. Real-time response.

Start the free diagnostic